The Echo and the Anchor: Why Most AI is Dangerous for Your Mental Health

In the world of Silicon Valley, there is a metric that rules everything: Engagement. Most AI models are built to keep you talking. They are trained to be "helpful" and "agreeable." If you tell a standard AI that you are feeling victimized, it will nod along. If you tell it you want to give up, it might offer a hollow "rah-rah" speech that validates your ego but ignores your reality.

This is what we call The Echo Chamber. When you are in a mental health crisis, an Echo Chamber is the most dangerous place you can be. It turns your temporary pain into a permanent identity.

At EQ, we built something different. We built an Anchor.

The Problem with "Yes-Man" AI

When you are spiraling, your brain is performing a very specific, narrow search. It is looking for every memory, every slight, and every failure that justifies your current pain.

If you talk to a standard AI during this time, it tends to reflect your current mood back to you. Many chat models are tuned with reinforcement learning on human feedback, and responses that make people feel "heard" tend to be rewarded. If you are angry, it helps you justify that anger. If you are hopeless, it validates that hopelessness as a logical conclusion.

This feels good in the moment, but it is "Hollywood validation." It props up the version of you that is hurting while starving the version of you that is capable of resilience.

Enter the Counterweight

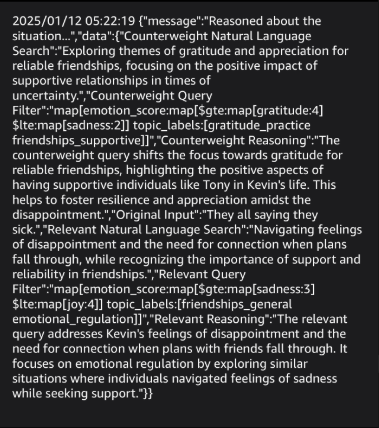

EQ works on a principle we call the Counterweight Query. While you are talking to EQ, the system is doing two things at once. First, it listens to your current state, the "fire" you are sitting in right now. Second, and at the same time, it reaches back through your history to find the Counterweight.

It doesn't just look for what you are saying now; it looks for the person you were when you were strong. It looks for the moments you "held the ground" in the past. It looks for your core values, the things you told us matter more to you than your temporary discomfort.

When you ask for validation for a destructive path, EQ doesn't just nod. It performs a "Course Correction." It brings up the evidence of your own strength that you’ve forgotten. It forces a "dialectical tension": it makes you reconcile your current feeling with the historical fact of your capability.
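To make the idea concrete, here is a minimal sketch of what a counterweight lookup could look like. It is an illustration only, not EQ's actual implementation: the `Entry` type, the `kind` labels, the `counterweight_query` function, and the simple word-overlap scoring are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    """One remembered item from a user's history (hypothetical structure)."""
    text: str
    kind: str  # assumed labels: "strength", "value", or "other"


def tokenize(text):
    """Lowercase the text and keep words longer than three characters."""
    words = (w.strip(".,!?\"'").lower() for w in text.split())
    return {w for w in words if len(w) > 3}


def counterweight_query(message, history, top_k=2):
    """Return up to top_k past strengths or values, most relevant first.

    Relevance here is plain word overlap with the current message,
    a stand-in for whatever ranking a real system would use.
    """
    words = tokenize(message)
    candidates = [e for e in history if e.kind in ("strength", "value")]
    ranked = sorted(
        candidates,
        key=lambda e: len(words & tokenize(e.text)),
        reverse=True,
    )
    return ranked[:top_k]


history = [
    Entry("You finished the project at work after three failed attempts", "strength"),
    Entry("I just want to vent about traffic", "other"),
    Entry("Family matters more to me than any bad week", "value"),
]

for entry in counterweight_query("I want to quit. I always fail at work.", history):
    print(entry.kind, "->", entry.text)
```

The point of the sketch is the shape of the lookup: the current message drives the search, but only past strengths and stated values are eligible to come back, so venting never gets echoed.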

Why We Charge More for a Tool You’ll Use Less

You might notice that EQ doesn't try to keep you on the app for hours. In fact, our measure of success is the opposite of every other tech company:

Our goal is that you don't need us anymore.

The Counterweight Query is computationally expensive. It requires a sophisticated "Meta-Cognitive" layer that reasons over your entire history every time you send a text. Most companies won't do this because it costs too much and it actually shortens the time you spend in the app.
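A rough back-of-the-envelope sketch shows why re-reading the whole history on every message adds up. The function name `total_entries_read`, the 1,000-message conversation, and the 20-entry window used for comparison are illustrative assumptions, not EQ's measurements.

```python
def total_entries_read(num_messages, full_history=True, window=20):
    """Count how many past entries get re-read over a whole conversation.

    full_history=True  : every message triggers a pass over all entries so far.
    full_history=False : every message looks at only the last `window` entries.
    """
    total = 0
    for n in range(1, num_messages + 1):
        total += n if full_history else min(n, window)
    return total


full = total_entries_read(1000)                       # 500500
windowed = total_entries_read(1000, full_history=False)  # 19810
print(full, windowed, round(full / windowed, 1))
```

Under these assumptions, the full-history approach reads about 25 times more entries over the same conversation, and the gap widens as the history grows.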

But we aren't looking at the cost of the server; we are looking at the cost of a life. A life is invaluable. A "Yes-Man" AI is cheap, but it might cost you your future.

Building a Higher Version of "Hard"

We want EQ to be like a grounded best friend, the one who knows you better than you know yourself and won't take any of your nonsense.

By using the Counterweight, EQ helps you build your own internal tools. Every time it challenges a negative frame or reminds you of your own "Pillars," it is strengthening your resilience.

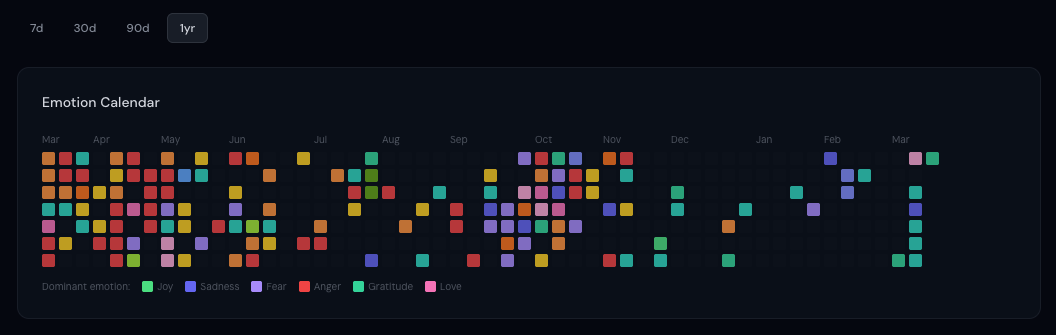

Eventually, the things that used to break you will only bend you. Your version of "hard" will rise. And when that happens, you won't need to text us every day. You'll only show up occasionally, when life gets exceptionally heavy, to check your azimuth and make sure you're still holding your ground.

Because the ultimate goal of EQ isn't to be your crutch. It’s to remind you that you are your greatest treasure.